
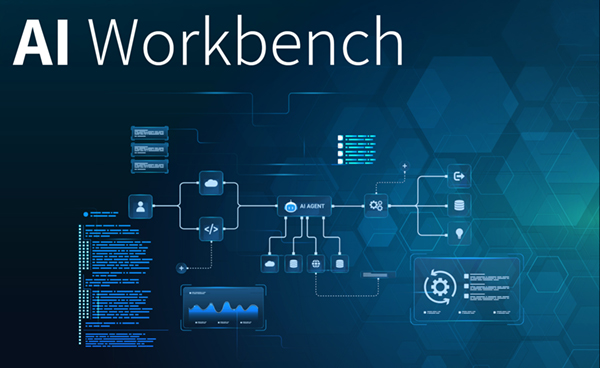

Industrial AI Applications with AI Workbench from PROSTEP

By Norbert Lotter

The major challenge when using artificial intelligence (AI), and large language models in particular, is generating reproducible results. To address this, PROSTEP's AI Workbench combines deterministic program code with AI functionality. Thanks to predefined modules, even complex processes can be mapped quickly and reliably.

According to estimates, engineers spend over a third of their working hours searching for information and on similar activities. This is partly because they normally have to collect information from a large number of different source systems and filter reliable data out of a deluge of information. This is where large language models (LLMs) can save an enormous amount of time, provided they deliver reliable and reproducible results.

However, ChatGPT and similar chatbots are known to occasionally "hallucinate", i.e. produce results that are incorrect or even fictitious. This has to do with how language models work: they are geared towards probability and plausibility, not necessarily towards correct answers, and they lack the requisite factual knowledge. A possible solution to this dilemma, which PROSTEP is currently working on, is to combine deterministic program code with AI functions with the aim of guaranteeing the reproducibility of the results.

PROSTEP's AI Workbench is a modular system that can be used to implement powerful AI applications with a minimum of in-house programming. It can be used as a simple chatbot, for example to answer user queries about the documentation of a software application. But the toolkit is also powerful enough to build an AI-based agent that can take on more complex tasks, such as evaluating data from different source systems based on an ontology. The same technology can be used to map LLM-based AI use cases without having to reinvent the wheel time and time again.

Information Retrieval and AI Orchestration

The AI Workbench consists primarily of three components: first, a simple web front-end that can easily be expanded to include additional interface functions; second, information retrieval functions for reading data into a chunk database and processing it for use by AI; and third, AI orchestration, which handles queries with complex algorithmic processes that combine AI functionality and deterministic program code instead of single prompts. The application uses AI workflows not only for orchestration but also for information retrieval.

In the case of demanding tasks such as complex support requests, expert advice or automated analyses, it must be possible for AI to access the relevant information quickly. This requires a powerful semantic search. The basis for this is provided by an information retrieval function that comprises three steps. First of all, the data and documents are imported, regardless of whether they are PDF, JSON, plain text or another format. Data can, however, also be imported from other data management systems, e.g. using our OpenPDM integration technology. The raw data is then classified, summarized, keyworded, indexed, interlinked, etc. with the aim of determining more precisely and easily the volume of information that an LLM is to use to answer a question. AI can also be used for this purpose, e.g. to semantically evaluate preliminary result sets. The core of the search functionality, however, uses deterministic code to search the prepared data lexically (e.g. with Elasticsearch) or semantically (e.g. with a vector index).

To ensure reproducible results, the application uses a combination of three technical capabilities during AI orchestration. First of all, the prompts are structured systematically and divided into specific sections that describe, for example, the context, the actual query, additional input data from earlier processes, and the results.
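
The idea of a prompt divided into fixed sections can be illustrated with a minimal Python sketch. It is not the AI Workbench's actual prompt format: the class `StructuredPrompt`, its field names and the section headings are assumptions made for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class StructuredPrompt:
    """Hypothetical prompt with named sections, rendered in a fixed order."""
    context: str
    query: str
    # Results of earlier steps, keyed by an illustrative name.
    inputs: dict[str, str] = field(default_factory=dict)
    # Description of the form the result should take.
    result_format: str = ""

    def render(self) -> str:
        parts = [f"## Context\n{self.context}", f"## Query\n{self.query}"]
        # Sort the keys so identical inputs always yield identical prompt text.
        for name in sorted(self.inputs):
            parts.append(f"## Input: {name}\n{self.inputs[name]}")
        if self.result_format:
            parts.append(f"## Expected result\n{self.result_format}")
        return "\n\n".join(parts)


prompt = StructuredPrompt(
    context="You answer questions about product documentation.",
    query="How is a document imported?",
    inputs={"retrieved_chunks": "chunk text", "earlier_summary": "summary text"},
    result_format="A short answer that cites the chunks used.",
)
text = prompt.render()
```

Rendering the sections in a fixed order means that the same inputs always produce the same prompt text, which is one precondition for comparing results across runs.
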

Second, the individual prompts are linked to create an algorithmic process. This involves constructs such as sequences, loops (while/until) and conditional execution (if/then/else, switch/case), which make it possible to create a structured sequence of AI interactions in which the results of one interaction serve as input for the next. The sequence of action patterns does not have to be programmed; it can be created declaratively, and deterministic code can be executed at any point in the sequence.

The third basic technical capability is what is referred to as "tool calling" and uses the Model Context Protocol (MCP). In principle, it means that an LLM is made aware of remote services. The LLM can call the functions provided by these services to process the current query and use the results as additional input in the prompt.
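
A declaratively described process of this kind could look roughly like the following Python sketch. The step schema (`kind`, `out`, `template`, `cond`, `body`, `max_iter`), the interpreter `run` and the stand-in `fake_llm` are all hypothetical and are not taken from the AI Workbench. Tool calling via MCP is deliberately left out.

```python
from typing import Any, Callable

State = dict[str, Any]
LLM = Callable[[str], str]


def run(steps: list[dict], state: State, llm: LLM) -> State:
    """Interpret a declarative list of steps against a shared state."""
    for step in steps:
        kind = step["kind"]
        if kind == "prompt":
            # Fill the template from the state; the reply feeds later steps.
            state[step["out"]] = llm(step["template"].format(**state))
        elif kind == "code":
            # Deterministic code can run at any point in the sequence.
            state[step["out"]] = step["fn"](state)
        elif kind == "if":
            branch = step["then"] if step["cond"](state) else step.get("else", [])
            run(branch, state, llm)
        elif kind == "until":
            # Repeat the body until the condition holds, with a hard cap.
            for _ in range(step["max_iter"]):
                run(step["body"], state, llm)
                if step["cond"](state):
                    break
        else:
            raise ValueError(f"unknown step kind: {kind!r}")
    return state


def fake_llm(prompt: str) -> str:
    """Stand-in for a model call so that the sketch runs offline."""
    return f"answer({len(prompt)} chars)"


workflow = [
    {"kind": "code", "out": "chunks", "fn": lambda s: ["chunk A", "chunk B"]},
    {"kind": "prompt", "out": "draft",
     "template": "Use {chunks} to answer: {question}"},
    {"kind": "code", "out": "attempts", "fn": lambda s: 0},
    {"kind": "until", "max_iter": 3, "cond": lambda s: s["attempts"] >= 2,
     "body": [
         {"kind": "code", "out": "attempts", "fn": lambda s: s["attempts"] + 1},
         {"kind": "prompt", "out": "draft", "template": "Refine: {draft}"},
     ]},
    {"kind": "if", "cond": lambda s: s["draft"].startswith("answer"),
     "then": [{"kind": "code", "out": "status", "fn": lambda s: "ok"}],
     "else": [{"kind": "code", "out": "status", "fn": lambda s: "retry"}]},
]

result = run(workflow, {"question": "Which component failed?"}, fake_llm)
```

Because the workflow is plain data, it can be inspected, stored or generated without touching the interpreter. The control flow itself stays deterministic; only the `prompt` steps depend on the model.
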

AI-based Agent for Error Analysis

The combination of web interface, AI orchestration and information retrieval means that the AI Workbench provides a powerful basis for mapping almost any AI use case. PROSTEP is currently using the toolkit to develop an ontology-based AI agent for the intelligent evaluation of complex PLM datasets. Such an agent can, for example, answer the question of which components in a technical system are involved in a fault event. The basis for this is provided by requirements, components, test cases and test results from the Mars Rover project, which are read into the workbench's internal database, processed and linked to each other during information retrieval. The data could, however, just as well come from widely used requirements management, PLM and test management systems.

The starting point for the use case is the failure of the Mars Rover after several hours of continuous operation at a certain ambient temperature. The AI agent now needs to answer the question of whether, according to the requirements, the Mars Rover should have functioned normally under these conditions and which components were involved in the failure or should have prevented it. To do this, correlations between data from different sources in the PLM world have to be established using a uniform ontology so that the AI agent automatically recognizes which data it requires to answer the user query, retrieves this data from the database and combines the results to create a coherent answer.

In the context of requirements and test management, a number of other use cases are conceivable in which such an AI agent could provide valuable assistance. It could, for example, automate the derivation of test cases from the requirements or automatically convert the description of a test sequence into executable tests.
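
The deterministic part of the fault analysis described above, following ontology links from a failed test result back to the components concerned, could be sketched as follows. The relation names (`satisfied_by`, `verified_by`, `has_result`) and all record IDs are invented for illustration. They do not come from the Mars Rover dataset or from PROSTEP's ontology.

```python
from collections import defaultdict

# Typed links between records: (source, relation, target). All IDs are invented.
LINKS = [
    ("REQ-1", "satisfied_by", "COMP-thermal"),
    ("REQ-1", "satisfied_by", "COMP-battery"),
    ("REQ-1", "verified_by", "TEST-7"),
    ("REQ-2", "satisfied_by", "COMP-wheel"),
    ("REQ-2", "verified_by", "TEST-9"),
    ("TEST-7", "has_result", "RES-7-fail"),
    ("TEST-9", "has_result", "RES-9-pass"),
]


def build_index(links):
    """Index the links in both directions for fast traversal."""
    forward = defaultdict(list)   # (source, relation) -> targets
    backward = defaultdict(list)  # (target, relation) -> sources
    for src, rel, dst in links:
        forward[(src, rel)].append(dst)
        backward[(dst, rel)].append(src)
    return forward, backward


def components_for_result(result_id, links=LINKS):
    """Walk result -> test case -> requirement -> components."""
    forward, backward = build_index(links)
    components = set()
    for test in backward[(result_id, "has_result")]:
        for req in backward[(test, "verified_by")]:
            components.update(forward[(req, "satisfied_by")])
    # Sort so that the answer is identical on every run.
    return sorted(components)


involved = components_for_result("RES-7-fail")
```

In a setup like this, the LLM would only need to decide which traversal to request and how to phrase the answer, while the lookup itself stays reproducible.
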

With its AI Workbench, PROSTEP provides a powerful framework that makes it very easy to implement complex AI applications without having to program them from scratch. The components for information retrieval and AI orchestration, together with the design of the user interfaces, take on a large part of the work and ensure reliable and reproducible results. This is a key prerequisite for industrial AI applications.

© PROSTEP AG. All rights reserved.